With AI, Ship Less.

A PM Who Ships Nothing Will Outperform the PM Who Ships Everything.

Two PMs Walk Into a Product Review

First PM presents her quarter. 11 features shipped. New recommendation engine. Redesigned onboarding. Push notification system. Loyalty points. Saved addresses. Dark mode. Quick reorder. Referral program. Three more I can’t remember. The slide has a long list and a big number at the top.

Applause. “Great velocity.” 👏

Second PM presents. 2 features shipped. One: a simplified checkout that reduced cart abandonment by 18%. Two: a reorder flow that increased order frequency by 23% in the test group.

Polite nods. “Anything else in the pipeline?” 👀

Here’s the thing.

The first PM shipped 11 features. Nine of them have less than 5% adoption. Two of them created support tickets. One conflicts with a feature another team shipped the same quarter.

Total business impact: unclear and probably negative when you count the maintenance cost.

The second PM shipped 2 features. Both moved a KR. Both have clean adoption curves. Both are low-maintenance because they were tested and iterated before launch.

Who’s the better PM?

You already know the answer. But your org probably rewards the first one.

Shipping Is No Longer the Bottleneck

There was a time when shipping was hard. Engineering cycles were scarce, and getting something from idea to production took months. Remember?

The PM who could ship more was, by definition, creating more value. Because the constraint was output.

AI broke that constraint.

When a PM can prototype in an afternoon. When an engineer can build a tested feature in days instead of sprints. When the cost of “let’s just ship it and see” approaches zero. The bottleneck moves.

The bottleneck is no longer “can we build this?” It’s “should we build this?”

And most PMs haven’t made that shift. They’re still optimizing for velocity. Shipping more features. Filling the changelog. Counting releases.

But when building is cheap, shipping is not the signal. Shipping the right thing is. And shipping the wrong thing is now cheaper too, which means it happens more often, which means the cost of bad decisions compounds faster than ever.

Think about the Real Cost of Shipping

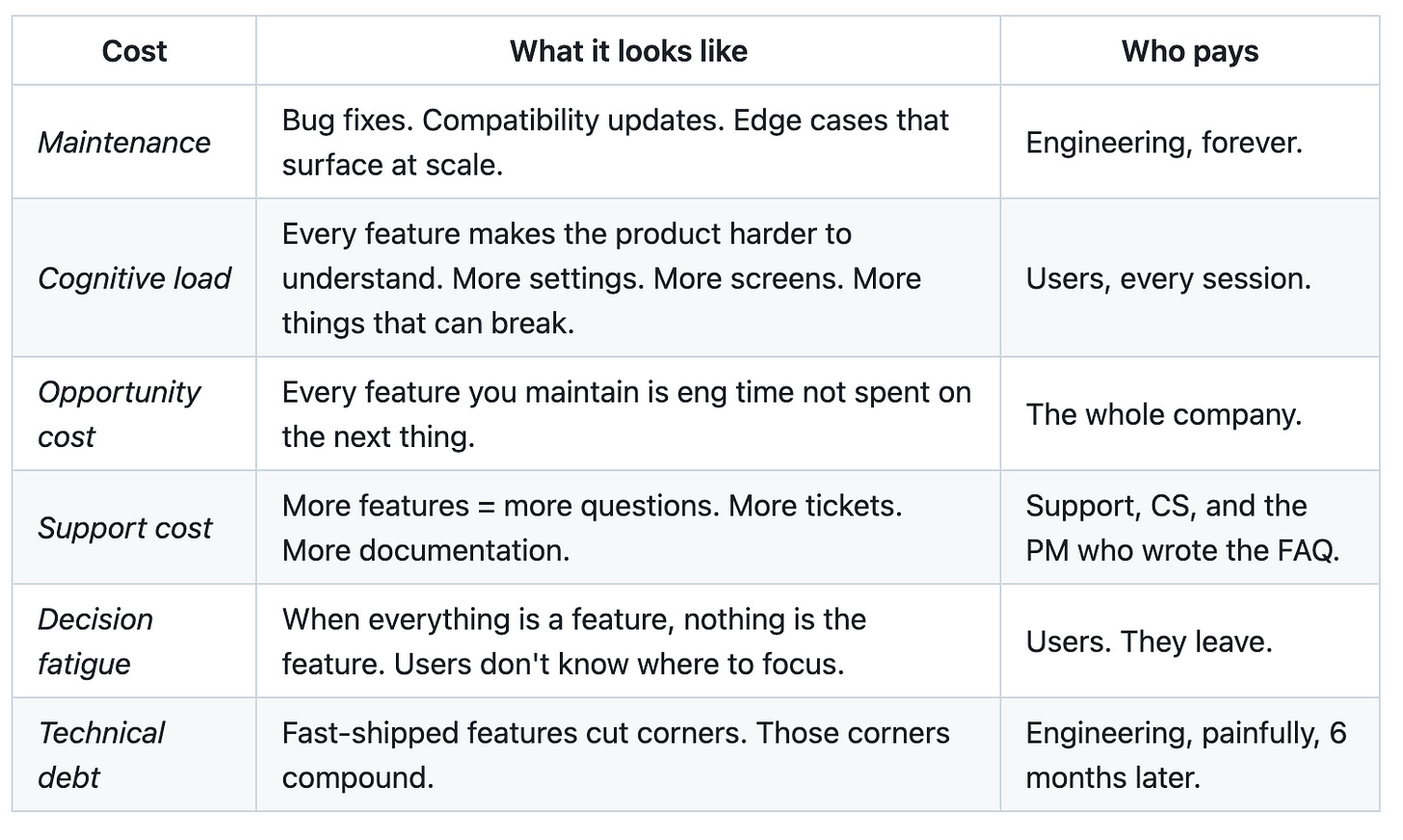

Every feature you ship has a price tag, its Real Cost of Shipping (RCS), that extends far past the build.

Adding a feature is easy, but removing one is nearly impossible.

Every feature is a one-way door disguised as a two-way door.

The PM who ships 11 features just added 11 lines to the maintenance budget. 11 sources of support tickets. 11 things that can conflict with future features. 11 things that make the product slightly harder to understand.

The best product decisions are often “no.”

But “no” doesn’t show up in a sprint review. “No” doesn’t make the changelog. “No” doesn’t get applause in a product review.

That’s a culture problem, not a PM problem.

But the best PMs fight that culture anyway.

What the Best PMs Do Instead

The PM who ships 2 features and moves 2 KRs didn’t get lucky. She followed a different process.

1. She killed ideas early.

Before anything got to a sprint, she ran it through a 48-hour validation. Prototype or fake door test. If users didn’t engage, the idea died on Wednesday, not after 3 sprints of eng time.

Most PMs validate ideas after building them. That’s like checking if someone wants to buy your house after you’ve already built it.

2. She defined “done” as impact, not deployment.

“Shipped” isn’t done. “Users changed their behavior” is done. The checkout simplification wasn’t marked complete when it hit production. It was marked complete when cart abandonment dropped 18% and held for 4 weeks.

This changes how you scope features. When “done” is a metric, you build the minimum thing that moves the metric. Not the maximum thing your engineer is excited about.

3. She said no and explained why.

Her stakeholders brought her 14 feature requests that quarter. She built 2. But she didn’t ignore the other 12. She responded to each one with data: “We tested a version of this. Here’s what happened. Here’s why it didn’t make the cut.” That’s harder than saying yes. And it builds more trust.

4. She measured the features she didn’t ship.

The requests she killed? She tracked what happened without them. Did the problem get worse? Did customers churn because of the gap? Did a competitor ship it and win users?

In 11 out of 12 cases, nothing happened. The feature nobody built was the feature nobody missed. That data reinforced her judgment for the next quarter.
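If it helps to make point 2 concrete, here is a minimal sketch of “done” as a metric check rather than a deploy event. Everything in it is an assumption for illustration: the function name `is_done`, its parameters, the 70% baseline, and the reading of “18%” as a relative drop. Only the 18% and the 4-week hold come from the checkout example above.

```python
def is_done(baseline_rate, weekly_rates, target_drop=0.18, hold_weeks=4):
    """Return True once the metric has dropped by target_drop and held.

    baseline_rate: cart abandonment before launch (hypothetical, e.g. 0.70)
    weekly_rates:  abandonment per week since launch, oldest first
    target_drop:   required relative drop (assumed relative, not absolute)
    hold_weeks:    how many consecutive recent weeks the drop must hold
    """
    if len(weekly_rates) < hold_weeks:
        return False  # not enough data yet, so not done
    threshold = baseline_rate * (1 - target_drop)
    # Every one of the most recent hold_weeks must sit at or below the threshold.
    return all(rate <= threshold for rate in weekly_rates[-hold_weeks:])


# Hypothetical numbers: a 70% baseline gives a threshold of about 57.4%.
held = is_done(0.70, [0.60, 0.57, 0.56, 0.55, 0.56])
slipped = is_done(0.70, [0.57, 0.56, 0.60, 0.55])
too_early = is_done(0.70, [0.55, 0.55])
```

With these made-up numbers, only the first case counts as done: the second slipped back above the threshold in week three, and the third hasn’t run long enough to know.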

Restraint Filters

A simple tool for deciding what not to ship. Before any feature makes it to a sprint, run it through these 4 filters.

Filter 1: Behavior Test

“Will this change user behavior, or just add a capability?”

Adding a “share” button adds a capability. But if nobody shares, it just adds a button. The question isn’t “can users do this?” It’s “will users do this?” If you can’t articulate which user, in which context, would use this feature every week, it probably shouldn’t exist.

Filter 2: Removal Test

“If we shipped this and removed it 3 months later, would anyone notice?”

Please, please be honest. Most features would disappear without a single support ticket. If the answer is “nobody would notice,” you just answered whether it should be built.

Filter 3: Maintenance Test

“Am I willing to maintain this for 3 years?”

Because that’s roughly how long features live. You’re not deciding to build a feature. You’re deciding to support a feature for 3 years. Does it still feel worth it?

Filter 4: Metric Test

“Which KR does this move, and by how much?”

If a feature doesn’t connect to a KR, it shouldn’t be in the sprint. If it connects to a KR but you can’t estimate the impact, you need to validate before building. “It might help retention” is not a product strategy. “We tested it and it improved 7-day retention by 4% in the test group” is.

Any feature that fails 2 or more of these filters gets killed. Not backlogged. Killed.
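The kill rule is simple enough to write down. Here is a minimal sketch, assuming you answer each filter with an honest yes or no. The class name, field names, and the “proceed” label are hypothetical; the only rule taken from the text is that failing 2 or more filters means killed.

```python
from dataclasses import dataclass


@dataclass
class FilterResult:
    changes_behavior: bool   # Filter 1: Behavior Test
    would_be_missed: bool    # Filter 2: Removal Test
    worth_maintaining: bool  # Filter 3: Maintenance Test
    moves_a_kr: bool         # Filter 4: Metric Test


def failed_filters(result):
    """Names of the filters this feature failed, in filter order."""
    return [name for name, passed in vars(result).items() if not passed]


def decide(result):
    """'kill' on 2 or more failed filters, otherwise 'proceed'.

    'proceed' is an assumed label: the rule only says what gets killed.
    """
    return "kill" if len(failed_filters(result)) >= 2 else "proceed"


# A share button nobody would use every week, miss, or tie to a KR.
share_button = FilterResult(False, False, True, False)
# A feature that passes every filter.
simplified_checkout = FilterResult(True, True, True, True)

share_decision = decide(share_button)
checkout_decision = decide(simplified_checkout)
```

The point of returning the failed filter names, not just the verdict, is that “no” needs an explanation. The list is the start of the answer you give the stakeholder.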

The Common Mistake PMs Make (We All Do)

Measuring themselves by output instead of outcome.

It’s natural. Output is visible. You can count features. You can list them on a slide. You can point to the changelog and say “look how much we shipped.”

Outcomes are harder to see, and they take time to materialize. They also require you to admit that some of what you shipped didn’t matter.

But the PM who ships 2 things that work will always beat the PM who ships 11 things that exist. “Exist” is not a product strategy. “Works” is.

AI made it possible to ship more features than ever. It also made it possible to validate, test, and kill ideas faster than ever. The PMs who use AI to ship more will lose to the PMs who use AI to decide better.

The Line I Want You to Remember

When building is free, the scarce resource isn’t engineering time. It’s user attention.

Every feature you ship asks users to notice it, learn it, and decide whether to use it. Their attention is finite. Your feature list is not. That math only works if most of what you ship earns that attention.

The PM who ships nothing and learns everything will outperform the PM who ships everything and learns nothing. Not because shipping is bad. But because shipping without learning is waste. And waste, no matter how fast you produce it, is still waste.

With AI: ship less. Learn more. Move the metric.

If this made you look at your feature velocity differently, share it with a PM who’s about to present a slide with 15 features shipped and zero metrics moved.

None of that is new; AI just accelerates it. You always should have shipped features that make sense. You always needed to validate. You always needed a strategy.